I really enjoy spending Sundays reading and watching a mix of things that pull my mind in every direction. It can feel exhausting at times, but the awareness that comes from it lets me finish the week on a happy note.

That is why, as the title already suggests, I find it striking when major technology and AI companies announce that they are hiring philosophers, or when philosophers themselves publicly share that they have joined these companies.

At a time when so much of the conversation is about AI becoming highly intelligent, perhaps even surpassing human intelligence one day, or reaching something close to professor-level capability, it is interesting to see philosophers becoming more visibly part of that world as well. Of course, the philosophers being mentioned here do not all have the same role. But even so, the trend itself is noteworthy.

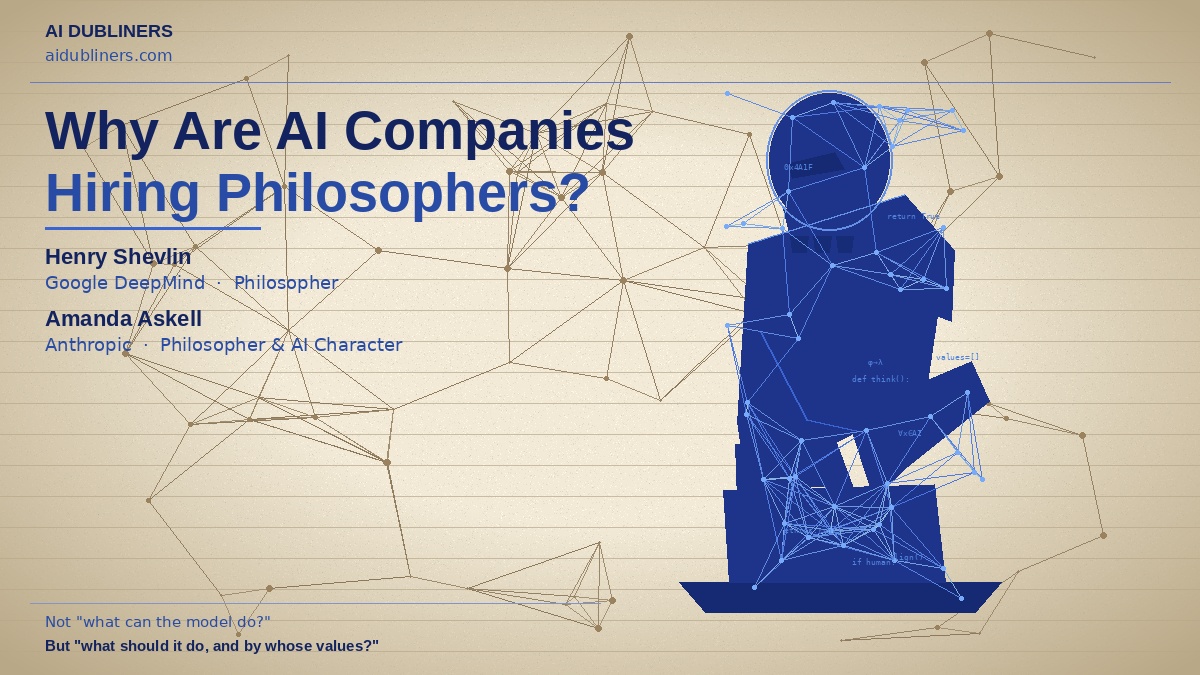

One of the developments that became visible in April 2026 was Henry Shevlin joining Google DeepMind with the title of “Philosopher.” Shevlin is a Cambridge-linked thinker whose work has focused on topics such as machine consciousness, human-AI relationships, and the moral status of artificial systems. DeepMind’s official careers page also clearly shows that the company works with philosophers on the responsibility and safety side. I have not seen a detailed official blog post from the company specifically about this appointment, but public reporting and DeepMind’s own pages are consistent with it.

On the Anthropic side, Amanda Askell’s role is easier to verify. In Anthropic’s official materials, Askell is clearly described as leading work on Claude’s character and as the lead author of Claude’s Constitution. In other words, philosophers, or people working in a deeply philosophical way, are not just commenting from the outside. They are directly involved in some of the most fundamental questions about how a model should behave, which principles should shape it, and how it should relate to people.

To me, this is the central point:

Major AI companies are not hiring philosophers for ethical window dressing. They are doing so because AI is no longer only an engineering problem. It has also become a question of values, judgment, oversight, consciousness, responsibility, and human-AI relationships.

Put differently, AI companies are not hiring philosophers because AI is becoming “smarter.” They are hiring them because AI is becoming more powerful, more autonomous, and more social. The real question is no longer just “What can the model do?” The real question is: “What should it do, what should it not do, and according to whose values should it behave?”

I think philosophers have at least five core roles here:

- Defining values: What does it actually mean for a model to be “good,” “harmless,” “honest,” or “fair”?

- Alignment: By which principles do we translate human intention into a technical system?

- Consciousness and moral status: If systems become increasingly human-like, how should we think about them?

- Human-AI relationships: How should trust, persuasion, dependency, and perceptions of authority be managed?

- Governance and responsibility: When something goes wrong, who is accountable and where should the boundaries be drawn?

That is why I do not see philosophers entering AI companies as a small detail. To me, it is one of the signs that the sector is starting to reach its own limits. As technology advances, the challenge is no longer only about building systems. It is also about thinking carefully about what kind of world those systems are serving.

It may well be that in the coming years we will see more philosophers, ethicists, social scientists, and researchers focused on human behaviour inside AI companies. The future of AI will be defined not only by model capability, but also by the framework through which that capability is directed.

For those who want to explore the topic in more depth, the following sources are worth looking at:

- Google DeepMind Careers

  DeepMind explicitly shows that it works with philosophers in responsibility and safety roles.

- Google DeepMind Responsibility & Safety

  The company’s framework for developing AI safely and responsibly.

- Anthropic: Claude’s Constitution

  A foundational text, led by Amanda Askell, explaining the principles shaping Claude.

- Anthropic: Constitutional AI

  One of the most important works on how human values can be translated into AI systems.

- Anthropic: Claude’s Character

  Opens up the discussion beyond harmlessness to character and behavioural style.

- Anthropic: Collective Constitutional AI

  Shows how values can be shaped not only internally, but also through public input.

- Henry Shevlin: How Could We Know When a Robot Was a Moral Patient?

  An important framework for thinking about when a machine might deserve moral consideration.

- Henry Shevlin: Consciousness, Machines, and Moral Status

  Directly relevant to debates around AI consciousness and moral status.

- Murray Shanahan / DeepMind: Simulacra as Conscious Exotica

  Examines the tendency to attribute consciousness to AI systems that sound human.

- DeepMind: A Pragmatic View of AI Personhood

  Takes a more practical and governance-oriented approach to the question of AI personhood.

At AI Dubliners, we do not only follow companies. We also follow what companies are building with AI, which areas these technologies are touching, and how this transformation is reshaping culture, work, and society.

Because the story is not only about technology. The story is about the ideas through which technology enters human life.

And it increasingly seems that in the future of AI, philosophers will have just as much to say as engineers.

Sources

- Google DeepMind Careers

- Google DeepMind Responsibility & Safety

- Claude’s Constitution

- Constitutional AI: Harmlessness from AI Feedback

- Claude’s Character

- Collective Constitutional AI

- How Could We Know When a Robot Was a Moral Patient?

- Consciousness, Machines, and Moral Status

- Simulacra as Conscious Exotica

- A Pragmatic View of AI Personhood